Craig Federighi admits that Apple could have handled CSAM detection features better

- Craig says that it’s not possible for your personal images to get flagged as CSAM.

- Also explains how it’s not a backdoor.

In an exclusive interview with Joanna Stern of The Wall Street Journal, Craig Federighi (Senior Vice President of Software Engineering at Apple) said he “wished that this had come out more clearly for everyone.”

In the interview, he acknowledged the widespread misunderstanding of two new features: child sexual abuse material (CSAM) detection in iCloud Photos and inappropriate image detection in iMessage.

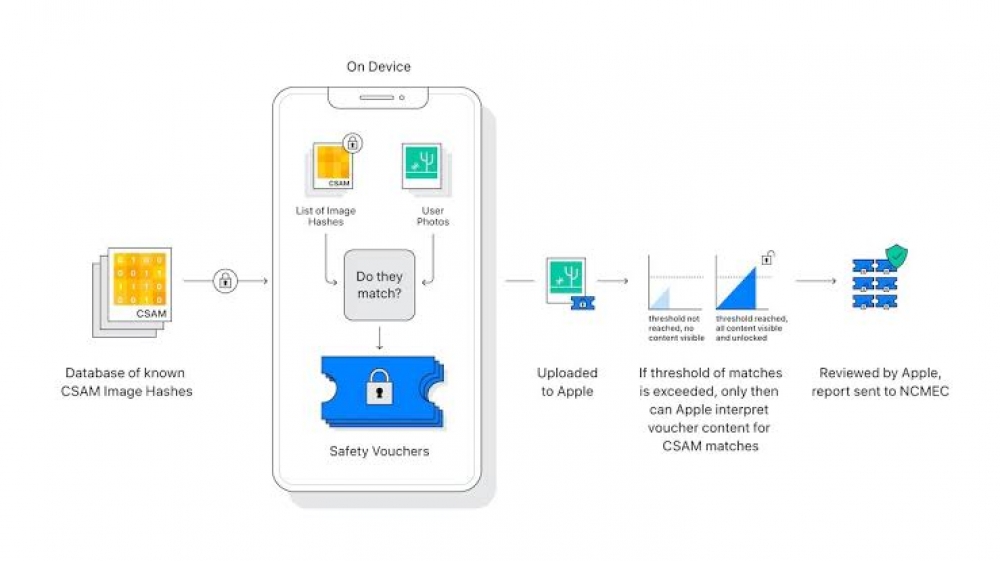

He elaborated on the CSAM detection feature, stating that it is private and secure: Apple is only alerted if your account has “something on the order of 30 known child pornographic images matching.” Only after a human moderator validates that the images are indeed CSAM does Apple alert the relevant authorities.

Because the feature matches hashes of your images against a database of known CSAM images from the National Center for Missing & Exploited Children, rather than analyzing what a photo depicts, Federighi said that your own images of, say, your naked children taking a bath will not get reported to the authorities by Apple.
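The threshold idea described above can be sketched in a few lines of Python. This is a toy illustration only, not Apple's implementation: the hash values are placeholder strings, the function names `count_matches` and `needs_human_review` are hypothetical, and the cryptographic protections of the real system are left out entirely. The threshold of 30 comes from Federighi's "something on the order of 30" remark.

```python
# Toy illustration only; NOT Apple's implementation.
# Hashes are placeholder strings and all names here are hypothetical.

REVIEW_THRESHOLD = 30  # "something on the order of 30", per the interview


def count_matches(account_hashes, known_hashes):
    """Count how many of an account's image hashes appear in the known database."""
    return sum(1 for h in account_hashes if h in known_hashes)


def needs_human_review(account_hashes, known_hashes, threshold=REVIEW_THRESHOLD):
    """Flag an account for human review only once the match count reaches the threshold."""
    return count_matches(account_hashes, known_hashes) >= threshold


if __name__ == "__main__":
    known = {f"known-{i}" for i in range(100)}         # stand-in database entries
    personal = [f"personal-{i}" for i in range(500)]   # photos with no database match
    print(needs_human_review(personal, known))         # False
```

In this sketch, a library made up entirely of personal photos never shares a hash with the database, so it is never flagged no matter how large it is. Even an account with a handful of matches stays below the threshold, and reaching it only triggers human review, not a report.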

In response to accusations from people such as whistleblower Edward Snowden that Apple is creating a backdoor into its operating systems, Federighi said, “I think in no way is this a backdoor. I don’t understand, I really don’t understand that characterization.” He stated that Apple ships the same database in China as in the U.S. or Europe, and that the company would refuse if a government tried to add any other images to the database.

One thing is for sure: Apple is going to have to work hard to rebuild its reputation as a privacy-respecting tech company, a reputation that has set it in stark contrast to tech giants like Google.

The original interview can be viewed here.

Published to Apple Scoop on 13th August, 2021.
